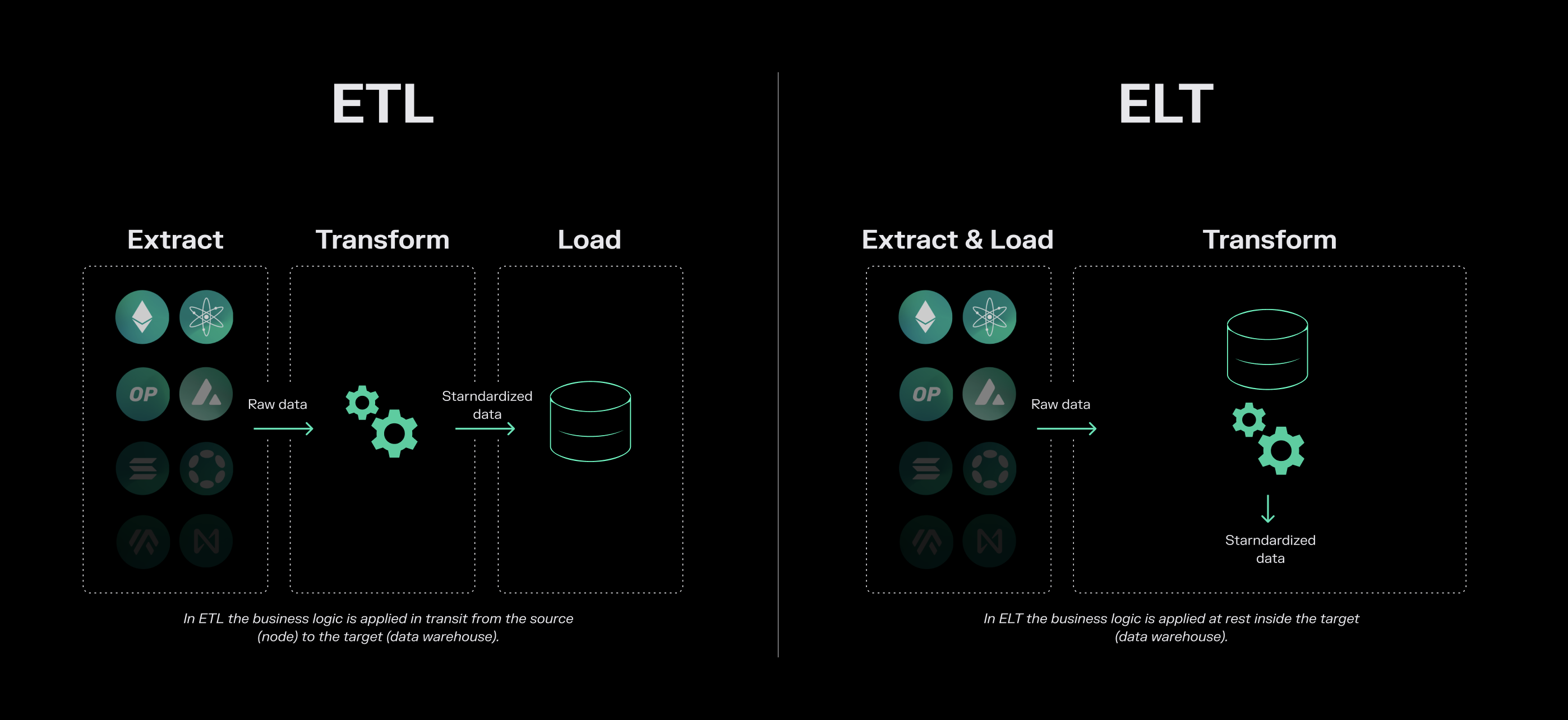

Token Terminal uses an ELT (Extract, Load, Transform) paradigm to process raw blockchain data into standardized metrics. This approach keeps data transparent, reproducible, and auditable at every stage. In a typical ETL (Extract, Transform, Load) workflow, by contrast, data is transformed before it is stored. That can work well if the structure of your data is stable. But in our industry, things change constantly, and in ways that differ from most traditional data pipelines. For example:

- Protocol upgrades: Blockchains regularly introduce upgrades that can include breaking changes to data schemas. A protocol might add new transaction types, restructure contract storage, or modify how events are emitted.

- Smart contract deployments: Decentralized applications continuously launch new products or business lines, often across multiple chains. Each new contract introduces additional data that needs to be standardized and integrated.

- Methodology changes: Even the meaning of common metrics evolves over time. How you define metrics like fees, active users, or token incentives can change as protocols mature and industry practices develop.

Because ELT stores the raw data first and transforms it afterwards, it handles these changes well:

- Traceability: Every metric can be linked directly back to the raw source data it was computed from.

- Reproducibility: When new smart contracts are deployed or methodologies change, we can reprocess historical data within minutes instead of waiting days to re-ingest it.

- Scale: This approach allows us to maintain standardized metrics efficiently across hundreds of blockchains and thousands of applications.
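
The properties above can be illustrated with a minimal sketch. It is not Token Terminal's actual pipeline: the record shapes (`RawEvent`, `MetricRow`), the in-memory `RAW_STORE`, the contract names, and the two fee methodologies are all hypothetical and chosen only to show the idea. Raw data is loaded untouched, each metric is a function over that raw data, and a methodology change means re-running the transform rather than re-ingesting anything.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class RawEvent:
    # Hypothetical shape of a raw record, stored exactly as extracted.
    tx_hash: str
    contract: str
    amount: float


@dataclass(frozen=True)
class MetricRow:
    name: str
    value: float
    # Traceability: the raw records this value was computed from.
    source_tx_hashes: Tuple[str, ...]


# "Load" step: raw events are kept as-is (an in-memory stand-in for a warehouse).
RAW_STORE: List[RawEvent] = [
    RawEvent("0xaaa", "pool_v1", 10.0),
    RawEvent("0xbbb", "pool_v1", 5.0),
    RawEvent("0xccc", "pool_v2", 20.0),
]


def _sum_fees(raw: List[RawEvent], tracked: set) -> MetricRow:
    # Keep only events from tracked contracts and remember where they came from.
    rows = [e for e in raw if e.contract in tracked]
    return MetricRow(
        name="fees",
        value=sum(e.amount for e in rows),
        source_tx_hashes=tuple(e.tx_hash for e in rows),
    )


def fees_v1(raw: List[RawEvent]) -> MetricRow:
    # Hypothetical original methodology: only the first contract is tracked.
    return _sum_fees(raw, {"pool_v1"})


def fees_v2(raw: List[RawEvent]) -> MetricRow:
    # Hypothetical updated methodology: a newly deployed contract is included.
    return _sum_fees(raw, {"pool_v1", "pool_v2"})


def transform(raw: List[RawEvent],
              methodology: Callable[[List[RawEvent]], MetricRow]) -> MetricRow:
    # "Transform" step: runs after loading, so it can be re-run at any time.
    return methodology(raw)


if __name__ == "__main__":
    print(transform(RAW_STORE, fees_v1))
    # Methodology changed: reprocess the same stored raw data, no re-ingestion.
    print(transform(RAW_STORE, fees_v2))
```

In this sketch, switching from `fees_v1` to `fees_v2` changes the metric for all of history by re-running `transform` over `RAW_STORE`, and `source_tx_hashes` shows which raw records back each value.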